AMD (Xilinx)

VCK5000 Versal Development Card

The AMD VCK5000 Versal development card is built on the AMD 7nm Versal adaptive SoC architecture and is designed for AI Engine development with the Vitis end-to-end flow and for AI inference development with partner solutions.

Item code: VAR-827001490

Manufacturer part number: DK-VCK5000-G-ED

Customs tariff code: 8471500000

Item located in and shipped from: Riedlingen, Germany

Product Overview

The AMD VCK5000 Versal development card is built on the AMD 7nm Versal adaptive SoC architecture and is designed to optimize 5G, data center compute, AI, signal processing, radar, and many other applications. Fully supported by Vitis, Vitis AI, and partner solutions such as Mipsology Zebra and Aupera VMSS, the VCK5000's domain-specific architecture delivers strong performance per watt while keeping ease of use in mind through C/C++ software programmability.

Delivering near 100% compute efficiency per watt in standard AI benchmarks and a 2x TCO reduction compared to flagship NVIDIA GPUs, the VCK5000 development platform is well suited to CNN, RNN, and NLP acceleration for cloud and edge applications.

AI Inference Development

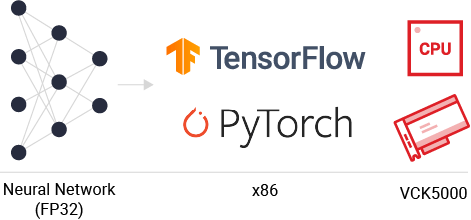

If you are an AI developer, you can run your trained TensorFlow and PyTorch models directly on Versal using Mipsology Zebra, and build, configure, and deploy computer vision applications on FPGA platforms with the Aupera Video Machine Learning Streaming Server solution.

Key Features

Explore partner solutions and articles, and learn about the key features for AI inference development with the VCK5000.

2x TCO Reduction vs Mainstream NVIDIA GPUs

- 2x perf/W and perf/$ compared to NVIDIA Ampere with standard MLPerf models

- Achieves 90% compute efficiency

- Consumes less than 100 W at card level

2x End-to-End Video Analytics Throughput vs NVIDIA GPUs

- Full pipeline from H.264 decode through computer vision to up to 10 AI models

- Video decode and CV run on an x86 CPU or a discrete Alveo U30 card

- Plug-in-based pipeline composition with FFmpeg / GStreamer

ML Heavy: H.264 Decode + YOLOv3 + 3x ResNet-18

Video Heavy: H.264 Decode + Tiny YOLOv3 + 3x ResNet-50

Easy to Use with Familiar Frameworks

- Easy-to-use software flow for CPU and GPU users, with no hardware programming required

- Run inference from the TensorFlow framework directly on the board

- State-of-the-art models supported with mainstream frameworks: PyTorch, TensorFlow, TensorFlow 2, and Caffe

Partner Solutions

Mipsology Zebra AI Inference Solutions & Aupera Video Machine Learning Streaming Server